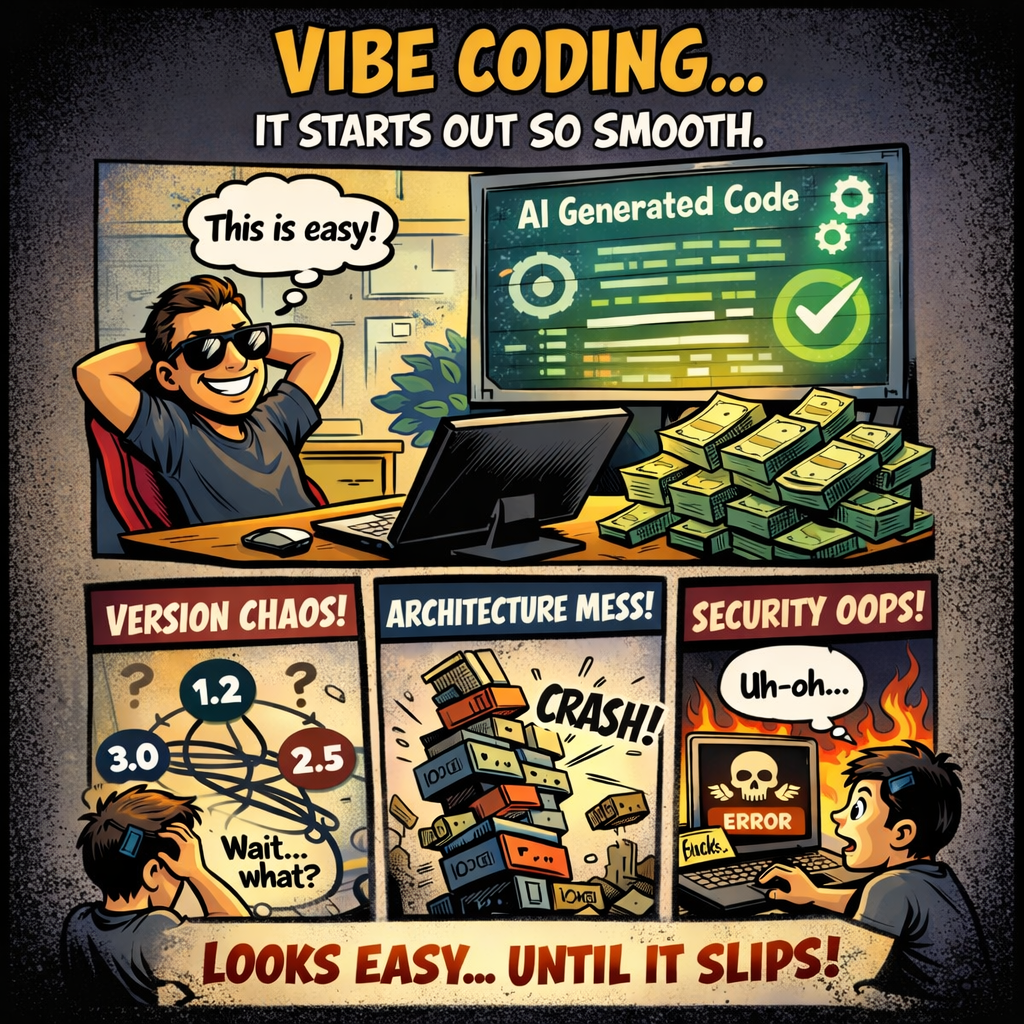

There is a growing belief that software engineering has become an optional skill, and that a 20-dollar subscription with the right prompts can build complex systems without any understanding of architecture, versioning, security, or operational reality. Engineers, according to this narrative, are a bottleneck that can be removed.

I am skeptical of claims like these, but I do not consider dismissal without evidence a serious position. Instead of arguing in the abstract, I tested the approach myself under real constraints, using a realistic technology stack and enough complexity to move beyond toy examples. What follows is not a rant, but a technical postmortem.

The Experiment

I tried what is commonly called vibe coding, using both ChatGPT and Gemini Pro not as autocomplete assistants but as the primary drivers of implementation decisions.

The system itself was intentionally simple, far smaller than what many vibe coding demos claim to produce, yet realistic enough to expose problems that only appear once real technologies are involved, such as SQL dialects, hyperscaler specifics, and architectural decisions. Here is the setup:

A Spring Boot application with a PostgreSQL database, packaged in Docker, deployed to AWS using App Runner and Aurora, with a GitLab CI/CD pipeline. The application itself was a CRUD-style system storing flight bookings, hotel reservations, and rental car bookings, including domain attributes such as airline, flight number, ticket numbers, and related metadata. A Vaadin UI was used for the frontend.
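To give a sense of the domain, the following is a rough, dependency-free sketch of the three booking types as Java records. The record and field names are hypothetical, and the persistence annotations a Spring Boot application would normally carry are deliberately left out so the sketch compiles on its own (records require Java 16 or later).

```java
import java.time.LocalDate;
import java.time.OffsetDateTime;
import java.util.Objects;

// Hypothetical, dependency-free sketch of the booking domain. In the real
// application these would typically be persistence entities; field names
// here are illustrative assumptions, not the experiment's actual schema.
record FlightBooking(String airline, String flightNumber, String ticketNumber,
                     OffsetDateTime departure) {
    FlightBooking {
        Objects.requireNonNull(airline, "airline");
        Objects.requireNonNull(flightNumber, "flightNumber");
        Objects.requireNonNull(ticketNumber, "ticketNumber");
        Objects.requireNonNull(departure, "departure");
    }
}

record HotelReservation(String hotelName, LocalDate checkIn, LocalDate checkOut) {
    HotelReservation {
        Objects.requireNonNull(hotelName, "hotelName");
        // A stay must cover at least one night.
        if (!checkOut.isAfter(checkIn)) {
            throw new IllegalArgumentException("checkOut must be after checkIn");
        }
    }
}

record RentalCarBooking(String provider, String pickupLocation,
                        LocalDate pickupDate, LocalDate returnDate) {
    RentalCarBooking {
        Objects.requireNonNull(provider, "provider");
        Objects.requireNonNull(pickupLocation, "pickupLocation");
        // Same-day rentals are allowed; returning before pickup is not.
        if (returnDate.isBefore(pickupDate)) {
            throw new IllegalArgumentException("returnDate must not be before pickupDate");
        }
    }
}
```

Putting invariants such as valid date ranges into the domain types is a design choice, not a requirement; the point is that someone has to decide where such rules live.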

Without clearly defined boundaries like these, the output quickly became meaningless, producing recommendations no experienced engineer would seriously consider. Suggesting an Oracle database for this setup, for example, would have been entirely disproportionate, comparable to setting a house on fire just to roast a chicken.

I therefore defined the technology stack, the programming language, and the deployment model upfront. Once these constraints were in place, I worked forward in dialogue, describing domain and feature requirements step by step. These sessions became long, to the point where the browser occasionally struggled to update the page, yet splitting the work across multiple chats caused essential context to be lost, resulting in ambiguous instructions and inconsistent outcomes. More on that later.

First Impressions: Polite, Confident, and Encouraging

Both ChatGPT and Gemini behave like extremely polite people pleasers. Every idea is treated as a good idea, every observation is acknowledged as valid, and every concern is met with reassurance. It feels a bit like having a personal cheerleader pushing you forward at all times. At first glance, this creates a strong sense of productivity. Large amounts of code appear quickly, often hundreds of lines within minutes: infrastructure scaffolding materializes, Terraform definitions look plausible, project structures are bootstrapped almost instantly, and even Vaadin UI drafts emerge with minimal effort.

For Terraform in particular, this approach worked surprisingly well, as long as the complexity remained low and I consistently reminded the system of the chosen hyperscaler and other constraints in every prompt. Still, something started to feel off rather quickly. From the perspective of an engineer with more than twenty years of experience, the process felt like eating soup with a fork. You are technically consuming something and receive constant positive feedback, yet the result is unsatisfying and inefficient. Before diving into where this approach breaks down, it is worth looking at what actually worked.

Where It Actually Worked

To be fair, there are areas where this approach performs genuinely well.

Terraform generation for basic setups worked reliably, bootstrapping a Spring Boot project structure was convenient, and UI scaffolding for Vaadin provided a reasonable starting point, even though I would still rely on official tooling for anything related to layout or styling. Even test generation worked to a certain extent, although it was hard to ignore that two of the generated tests effectively ended with assert true, an assertion that passes no matter what the code under test does.
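To illustrate why an always-true assertion is worthless, the sketch below contrasts it with a test that actually pins down behavior. The validator, its names, and the flight-number rule (two alphanumeric characters that are not both digits, one to four digits, an optional suffix letter) are simplified assumptions for illustration, and plain Java checks stand in for JUnit so the example runs without dependencies.

```java
import java.util.regex.Pattern;

// Hypothetical validator. The rule is a simplification of airline flight
// designators: two alphanumeric characters (not both digits), 1-4 digits,
// and an optional one-letter suffix.
final class FlightNumbers {
    private static final Pattern FLIGHT_NUMBER =
            Pattern.compile("(?![0-9]{2})[A-Z0-9]{2}[0-9]{1,4}[A-Z]?");

    private FlightNumbers() {}

    static boolean isValidFlightNumber(String value) {
        return value != null && FLIGHT_NUMBER.matcher(value).matches();
    }
}

final class FlightNumbersCheck {
    private FlightNumbersCheck() {}

    // Equivalent of "assert true": it can never fail, so it proves nothing.
    static void vacuousTest() {
        boolean alwaysTrue = true;
        if (!alwaysTrue) throw new AssertionError("unreachable");
    }

    // Pins down concrete behavior and fails as soon as the rule regresses.
    static void meaningfulTest() {
        expect(FlightNumbers.isValidFlightNumber("LH400"), "plain designator");
        expect(FlightNumbers.isValidFlightNumber("U21234"), "alphanumeric airline code");
        expect(!FlightNumbers.isValidFlightNumber("12345"), "numeric-only airline code");
        expect(!FlightNumbers.isValidFlightNumber("LH12345"), "too many digits");
        expect(!FlightNumbers.isValidFlightNumber(null), "null input");
    }

    private static void expect(boolean condition, String description) {
        if (!condition) throw new AssertionError("failed: " + description);
    }
}
```

The vacuous test passes against any implementation of isValidFlightNumber, including one that always returns true; the meaningful one does not.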

For quick experiments and exploratory work, especially when the goal is to validate an idea rather than to build a system, vibe coding is fast and convenient and significantly lowers the barrier to getting started.

That, however, is also where its usefulness largely ends.

Where Engineering Starts to Break Down

The first real cracks appeared around dependency management. Many engineers, myself included, tend to sigh when Maven dependency management comes up, yet it is still familiar territory. We know which CVE belongs to which library, which issues have been fixed, and when it makes sense to deliberately stay on a slightly older version to maintain compatibility or avoid new security problems.

In contrast, both models consistently selected library versions released years ago while ignoring more recent and actively maintained ones. A concrete example was the Java Persistence API, which was renamed to Jakarta Persistence in 2019 and whose packages later moved from javax.persistence to jakarta.persistence, the namespace current Spring Boot versions build on. I deliberately let several rounds of feedback pass before pointing this out, because I wanted to see whether that engineering knowledge would emerge on its own. Once I mentioned it, the observation was enthusiastically acknowledged as a "great idea." The concept of a Bill of Materials, particularly Spring Boot's dependency management model, was also ignored unless explicitly demanded. This is a limitation of statistical pattern matching, not evidence of an understanding of how software evolves.
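The Jakarta rename has a concrete, checkable consequence: code written for older versions imports javax.persistence, while Spring Boot 3 and later expect jakarta.persistence. Spotting that does not require a model; a plain source check is enough. The helper below is a hypothetical sketch (class and method names are illustrative) that flags such imports; static imports and fully qualified references are not covered.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Stream;

// Hypothetical helper: reports imports from the legacy javax.persistence
// namespace, which code targeting current Spring Boot versions cannot use.
final class LegacyPersistenceImports {
    private static final String LEGACY_PREFIX = "import javax.persistence.";

    private LegacyPersistenceImports() {}

    // Returns the 1-based line numbers of legacy imports in the source text.
    static List<Integer> findLegacyImports(String source) {
        List<Integer> hits = new ArrayList<>();
        String[] lines = source.split("\\R");
        for (int i = 0; i < lines.length; i++) {
            if (lines[i].strip().startsWith(LEGACY_PREFIX)) {
                hits.add(i + 1);
            }
        }
        return hits;
    }

    // Walks a source tree and prints every offending file and line.
    static void scan(Path root) throws IOException {
        try (Stream<Path> files = Files.walk(root)) {
            for (Path file : files.filter(p -> p.toString().endsWith(".java")).toList()) {
                for (int line : findLegacyImports(Files.readString(file))) {
                    System.out.println(file + ":" + line + " imports javax.persistence");
                }
            }
        }
    }
}
```

In a real build, the same idea would more typically live in a static-analysis rule, and library versions would come from Spring Boot's managed dependencies rather than being pinned by hand.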

SQL turned out to be another area where the limitations became visible very quickly. Although the target database was PostgreSQL, the generated queries frequently drifted into other dialects, most notably MySQL and Oracle. Syntax such as AUTO_INCREMENT, non-PostgreSQL LIMIT semantics, or vendor-specific date and sequence handling appeared in places where PostgreSQL expects different constructs, for example identity columns instead of AUTO_INCREMENT, even though the database choice had been stated explicitly. These are not cosmetic differences but behavioral ones that affect correctness, performance, and portability. Correct SQL in real systems is shaped by the exact database engine, its planner, its extensions, and its operational characteristics, none of which are consistently modeled when generation is driven by statistical similarity rather than an explicit understanding of the execution environment.
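Dialect drift of this kind is mechanical enough to catch crudely. The following is a hypothetical, regex-based sketch, not a SQL parser, that flags a few MySQL and Oracle constructs PostgreSQL rejects or handles differently, together with the PostgreSQL equivalent. The rule list is illustrative and far from complete.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Pattern;

// Hypothetical lint for SQL that is meant to run on PostgreSQL. It is a
// heuristic: it can miss cases and misfire on string literals or comments.
final class PostgresDialectLint {
    private static final Map<Pattern, String> RULES = new LinkedHashMap<>();

    static {
        RULES.put(Pattern.compile("\\bAUTO_INCREMENT\\b", Pattern.CASE_INSENSITIVE),
                "MySQL AUTO_INCREMENT: use GENERATED ALWAYS AS IDENTITY");
        RULES.put(Pattern.compile("\\bLIMIT\\s+\\d+\\s*,\\s*\\d+", Pattern.CASE_INSENSITIVE),
                "MySQL LIMIT offset, count: use LIMIT count OFFSET offset");
        RULES.put(Pattern.compile("\\b\\w+\\.NEXTVAL\\b", Pattern.CASE_INSENSITIVE),
                "Oracle seq.NEXTVAL: use nextval('seq')");
        RULES.put(Pattern.compile("\\bSYSDATE\\b", Pattern.CASE_INSENSITIVE),
                "Oracle SYSDATE: use CURRENT_TIMESTAMP or now()");
        RULES.put(Pattern.compile("\\bROWNUM\\b", Pattern.CASE_INSENSITIVE),
                "Oracle ROWNUM: use LIMIT or FETCH FIRST n ROWS ONLY");
        RULES.put(Pattern.compile("`"),
                "MySQL backtick identifiers: use double quotes");
    }

    private PostgresDialectLint() {}

    // Returns one message per rule that matches anywhere in the statement.
    static List<String> check(String sql) {
        List<String> findings = new ArrayList<>();
        for (Map.Entry<Pattern, String> rule : RULES.entrySet()) {
            if (rule.getKey().matcher(sql).find()) {
                findings.add(rule.getValue());
            }
        }
        return findings;
    }
}
```

Heuristics like this only catch the obvious cases; executing the queries against a real PostgreSQL instance remains the more reliable test.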

Over time, methods grew longer while the logic became increasingly repetitive. Earlier design decisions were forgotten as sessions progressed, most likely due to the finite context window, and variable names began to change without any clear rationale. When I encountered "not found" errors and raised them, new variables were sometimes introduced instead of reconciling the existing ones. More concerning, established patterns such as separation of concerns gradually blurred, and what initially resembled a layered architecture slowly eroded into tightly coupled code.

None of this happened because the tools are incapable or "stupid". It happened because they do not maintain a coherent internal model of the system over time. Large language models operate on a bounded context window that contains a rolling snapshot of recent interactions, not a persistent representation of architecture, invariants, or design intent. Earlier decisions only exist as text tokens, and once they fall out of the active context or become diluted by later instructions, they effectively cease to influence generation.

As a result, the model optimizes locally rather than globally. Each response is generated to best satisfy the most recent prompt, even if that contradicts earlier architectural choices or implicit constraints. There is no internal mechanism that enforces consistency across layers, tracks dependencies, or preserves invariants such as naming conventions, abstraction boundaries, or dependency management strategies. What looks like memory is pattern continuation, not architectural reasoning.
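A deliberately simplified model makes the eviction mechanism visible. The sketch below treats the context window as a fixed budget of words; real models count tokens, and chat frontends may truncate or summarize history differently. The class name and budget are hypothetical. Once enough later messages arrive, an initial constraint such as "Use PostgreSQL" is evicted, and nothing in the remaining context mentions it.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.List;

// Toy model of a bounded context window. Tokens are approximated by
// whitespace-separated words, which real tokenizers do not do.
final class RollingContext {
    private final int maxTokens;
    private final Deque<String> messages = new ArrayDeque<>();
    private int usedTokens;

    RollingContext(int maxTokens) {
        this.maxTokens = maxTokens;
    }

    private static int tokenCount(String message) {
        return message.isBlank() ? 0 : message.strip().split("\\s+").length;
    }

    // Appends a message and evicts the oldest ones until the budget fits,
    // always keeping at least the newest message.
    void add(String message) {
        messages.addLast(message);
        usedTokens += tokenCount(message);
        while (usedTokens > maxTokens && messages.size() > 1) {
            usedTokens -= tokenCount(messages.removeFirst());
        }
    }

    // What the model "sees" when generating the next response.
    List<String> visible() {
        return List.copyOf(messages);
    }

    boolean mentions(String text) {
        return messages.stream().anyMatch(m -> m.contains(text));
    }
}
```

Nothing in this model re-validates new output against the evicted constraint, mirroring the missing mechanism for enforcing consistency with earlier decisions.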

From an engineering perspective, this is fatal. Software systems rarely fail because of isolated lines of code; they fail through the gradual erosion of structure, contracts, and assumptions over time. Without a stable internal model of the system, long-running coherence is impossible, and the longer the interaction continues, the more likely it is that earlier decisions are silently undone rather than deliberately evolved.

That kind of judgment, deciding which dependencies belong in the system, which versions are acceptable, which layer a method belongs to, and which architectural constraints must not be violated, is not something vibe coding provides. It comes from engineering experience.
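Architectural constraints only survive a long-running project if something outside anyone's memory enforces them. Dedicated tools such as ArchUnit exist for this; the dependency-free sketch below only illustrates the idea. The layer names, the allowed-dependency table, and the assumption that dependencies are supplied as a hand-written list are all hypothetical; real tools extract dependencies from compiled classes.

```java
import java.util.ArrayList;
import java.util.EnumSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical layers of a Spring Boot + Vaadin application.
enum Layer { UI, SERVICE, REPOSITORY, DOMAIN }

final class LayerRules {
    // Which layers each layer may depend on (an assumed, strict layering).
    private static final Map<Layer, Set<Layer>> ALLOWED = Map.of(
            Layer.UI, EnumSet.of(Layer.SERVICE, Layer.DOMAIN),
            Layer.SERVICE, EnumSet.of(Layer.REPOSITORY, Layer.DOMAIN),
            Layer.REPOSITORY, EnumSet.of(Layer.DOMAIN),
            Layer.DOMAIN, EnumSet.noneOf(Layer.class));

    private LayerRules() {}

    // A compile-time dependency from one class to another.
    record Dependency(String from, String to) {}

    // Returns a message for every dependency that crosses a forbidden boundary.
    static List<String> violations(Map<String, Layer> layerOf, List<Dependency> deps) {
        List<String> result = new ArrayList<>();
        for (Dependency d : deps) {
            Layer from = layerOf.get(d.from());
            Layer to = layerOf.get(d.to());
            if (from == null || to == null) {
                result.add("unmapped class in " + d.from() + " -> " + d.to());
            } else if (from != to && !ALLOWED.get(from).contains(to)) {
                result.add(d.from() + " (" + from + ") must not depend on "
                        + d.to() + " (" + to + ")");
            }
        }
        return result;
    }
}
```

A check like this turns "the UI never talks to a repository directly" from a remembered intention into a build failure, which is exactly the kind of invariant that eroded during the experiment.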

The Core Problem: Confidence Without Accountability

Both tools were consistently confident in their output. Explanations sounded plausible, the generated code appeared clean at first glance, and even infrastructure definitions looked correct on the surface. Confidence, however, should not be confused with correctness.

The most problematic failure mode is therefore not obvious errors, but solutions that look reasonable while being subtly wrong. Detecting these issues requires experience and contextual knowledge, which makes this approach particularly risky for users without an engineering background. Even where the tooling performed comparatively well, such as Terraform generation, the limitations became visible quickly. Database setups repeatedly introduced a NAT Gateway by default, despite more cost-efficient and simpler alternatives being available, and while App Runner was used as instructed, the chosen CPU and memory configurations were often wildly oversized. None of this is catastrophic in isolation, but if left unchecked, the result is an architecture that is technically impressive, operationally questionable, and expensive enough to spark sudden interest from the CFO.

When systems built this way drift toward production, the issue is no longer just code quality. It becomes a question of invisible risk, including security exposure, license compliance problems, operational fragility, and long-term maintenance debt without clear ownership.

Vibe coders do not ship. They slip.

Why Assisted Coding Is Different

An important distinction is often overlooked in these discussions. Assisted coding tools are designed to reduce repetition, accelerate low and medium complexity tasks, and provide support when working through more complex problems. In these cases, the engineer remains responsible for structure, constraints, and decisions, while the tool helps with execution and recall.

Vibe coding follows a different model. Instead of supporting judgment, it implicitly attempts to substitute it by generating end-to-end solutions without maintaining an understanding of architectural intent or long-term consequences. This difference explains why tools such as Cursor or tightly integrated copilots can be effective in professional environments, while pure vibe coding struggles beyond exploratory use cases. One approach reinforces expertise, the other assumes it away.

Who Is Actually at Risk

The greatest risk arises for those without a solid engineering background. This includes founders who treat production systems as slightly extended demos, managers who equate short-term velocity with meaningful progress, and consultants who mistake generated output for genuine understanding. In practice, this often leads to systems being deployed without a clear ownership model, incomplete threat assessments, or a realistic plan for operation and maintenance.

These gaps tend to surface later as familiar incident patterns rather than immediate failures. Examples include security vulnerabilities introduced through outdated or incompatible dependencies, unexpected cloud cost explosions caused by default infrastructure choices, data integrity issues due to subtle SQL dialect mismatches, and systems that become effectively unmaintainable once the original generator or prompt history is gone. The risk is therefore not theoretical, but operational. Enterprise IT systems are rarely compromised by a lack of tools; far more often, the cause is the absence of accountability for the decisions those tools implicitly make.

A Clear Boundary

Vibe coding can be useful when the goal is to quickly explore or validate an idea without long-term expectations. It becomes irresponsible once the output starts to drift toward production use without proper engineering review. When systems built this way reach production environments, it usually indicates that responsibility for architectural decisions and risk assessment has broken down somewhere along the chain.

Final Thoughts

I did not run this experiment to argue that AI is useless. On the contrary, when applied with clear boundaries and proper oversight, it can be a powerful accelerator that reduces friction and amplifies productive work. The same mechanisms, however, become a liability amplifier when used without judgment, context, or accountability. Engineering is not the act of producing code, but the continuous process of making decisions under technical, operational, and organizational constraints. No subscription, regardless of how capable the tool behind it may be, replaces that responsibility.