AI in Quantitative Investing: Limits of Autonomous Stock Picking Systems

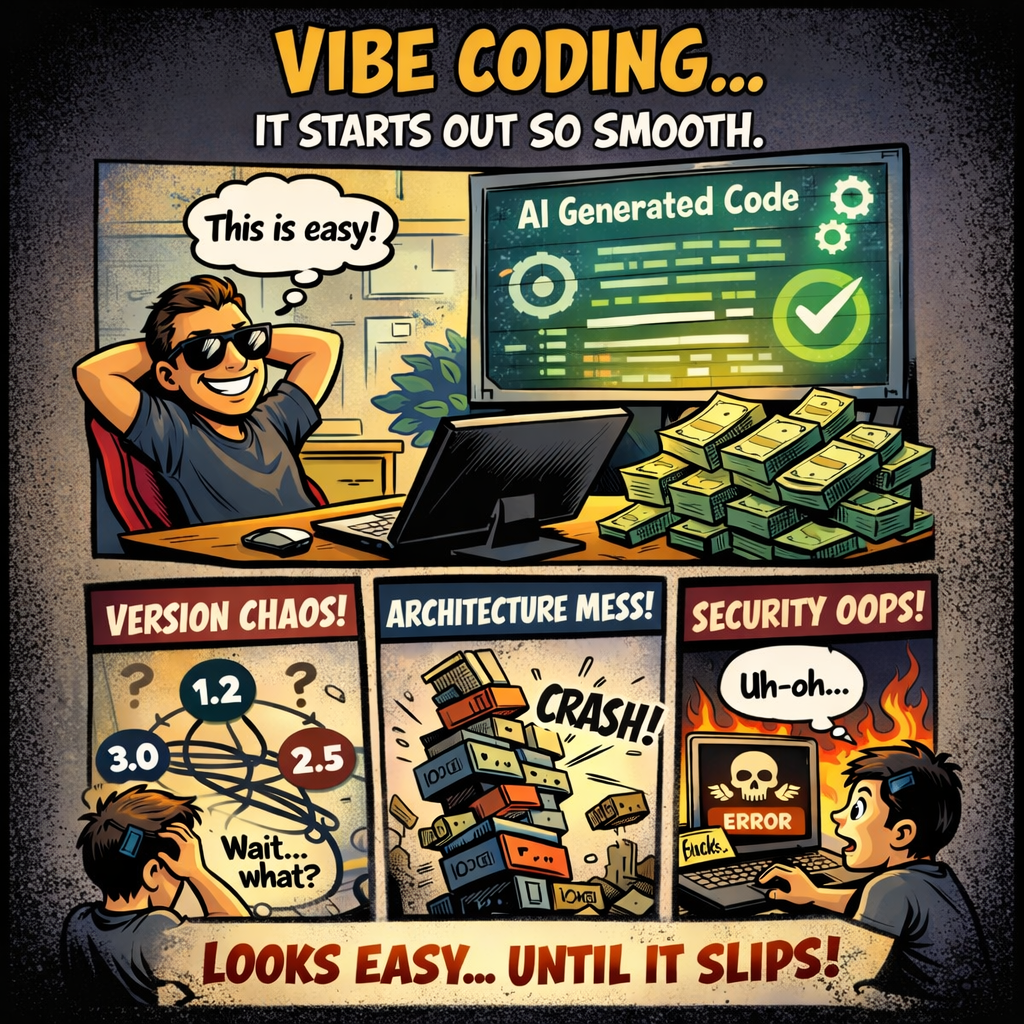

AI-driven stock-picking agents are often presented as the next step in quantitative investing. The narrative is compelling: autonomous systems ingest market data, reason over it, and continuously improve their decisions through feedback loops. In theory, this aligns well with modern machine learning paradigms and agent-based architectures. In practice, the situation is more constrained. These systems […]
